The use of artificial intelligence can go well beyond a search engine, lesson template, or calendar organizer—but many teachers still use AI mostly for those kinds of surface-level tasks.

As AI models advance, teachers increasingly need training not just on the basics of the programs, but on how to leverage their own professional judgment and expertise when working with AI technology.

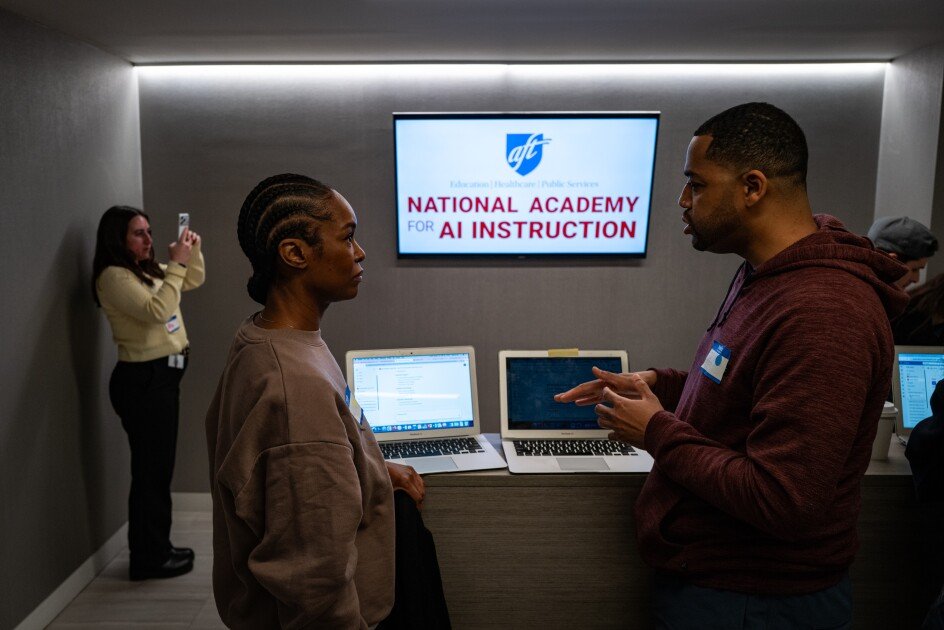

About 50 teachers came together in New York City on March 18 to learn to develop “agentic” AI tools: autonomous software systems that can handle more complex, multistep tasks involving an element of reasoning, and that can support teachers across subjects, grades, or platforms.

For Jing Liang Guan, a science teacher at the Brooklyn Science and Engineering Academy, this means the difference between a tool that can create a lesson plan and one that can help him stress-test his lessons for content gaps and confusing wording, and help him hone his teaching approach over time.

The New York City training is part of the National Academy for AI Instruction, a five-year, $23 million partnership between the American Federation of Teachers and three of the largest AI developers—Anthropic, Microsoft, and OpenAI—to train 400,000 teachers on how to use the technology in the classroom. The academy uses teachers, with limited support from developers, to train other teachers in how to use AI to improve instruction.

The share of teachers using AI-run tools nearly doubled from 2024 to 2025, according to a national survey by the EdWeek Research Center; 6 in 10 teachers say they use AI in their practice, but still most often for basic lesson plans and administrative work, rather than instructional improvement.

“We’re in this race for teachers to get this knowledge” of more meaningful use of AI, said Randi Weingarten, the AFT president. “This will become the most disruptive technology in our time. … There is a real demand from educators to learn so that they are in the driver’s seat for AI as opposed to the companies or districts or the technology itself.”

AI used for real-time problem-solving

These agentic AI tools, or agents, allow teachers to use their own professional judgment and experience to narrow the scope of information the AI draws on when responding to a request, according to Seth Reznik, a team lead for Microsoft Elevate, the tech giant’s $4 billion training initiative for schools and nonprofits. This makes it less likely that the AI will hallucinate, or make up information, or that it will provide responses that are facile or not based on up-to-date research, as prior studies have found AI assistants are prone to do.

Lois Torres, a preschool paraeducator in New York City public schools, wants to develop a research-backed AI agent that can help her and her co-teacher more quickly brainstorm alternative approaches when a lesson or a behavioral or academic intervention isn’t working.

“A lot of teachers are doing this work at home, just wracking their brains trying to figure out what’s going to work for the next day,” Torres said. “Sometimes, it takes a lot for the teacher and a para like me to figure out how to help a kid—and, then, when something doesn’t work, it’s like, OK, now we’ve got to brainstorm on the fly, what else to do?”

Similarly, Yasheema Cook is developing agents to help her create and monitor individualized education programs and differentiate lessons for the New York City 12th graders in her self-contained special education classes, who have a range of needs, including autism and intellectual disabilities. She said she can use students’ daily progress to adjust lessons over the week.

Cook and other teachers at the training said it is a careful balancing act to give the AI enough context on student disabilities and other needs to get meaningful, targeted help while also protecting student privacy. New York City public schools has not yet released the formal AI-use guidance that was planned for March, and teachers said it’s not always clear from one tool to another what data might be used to identify students.

For example, Jennifer Watters, a 3rd grade teacher at PS 29 in Queens, has been experimenting with AI since 2019 and said she regularly uses it for higher-order activities, such as developing questioning techniques for students from a wide variety of cultures. However, Watters said data privacy concerns prompted her last month to switch from OpenAI’s ChatGPT to Anthropic’s Claude, following the high-profile showdown in which Anthropic refused to allow the Pentagon to use its model for domestic surveillance.

“It’s really important that teachers know that this information and these tools, if gotten into the wrong hands, can be very dangerous for our students, for our profession, and for our jobs,” Watters said. “When we talk about our ethical obligations, privacy and student data, it’s super important that we abide by those things—but we need to know, do the AI abide by that, too?”

Teachers said the agents work best for tasks with clear rules, guidelines, and tone, and for which teachers can direct the AI to specific trusted sources. For example, some teachers developed agents that would write letters to parents about anything from upcoming field trips to academic challenges and discipline, which would be tailored to individual students, follow district and union guidelines on parent engagement, and include next steps based on appropriate research and resources.

However, some experts urge caution in offloading common teacher tasks like these to AI agents. For example, the letter-writing agent learns to reference details about particular families and mimic a teacher’s writing voice through examples of actual letters, but it can paradoxically make teachers less likely to remember important details about their students.

And teachers said they will need ongoing training and guidance to use the constantly evolving AI technology in the most effective and ethical ways.

“What we’re seeing nationally is, the more someone uses [AI], the less fearful they are of using it,” Weingarten said, but “there’s still a lot of fear in the absence of federal guardrails on privacy, on safety, on disinformation, on academic freedom.”